A pair of landmark court cases found Meta and YouTube liable last week for harming young users by designing algorithms that were addictive and led to mental health distress. The damages assessed against the companies amounted to a fraction of a percent of their annual earnings. The long-term implications, however, could be far more significant.

The rulings found that the algorithms a platform designs are not protected by Section 230, the federal law that shields social media companies from liability for user-posted content. That represents a crack in a legal defense these companies have relied on for years. And thousands of similar cases are already pending.

Section 230 has been under scrutiny for some time. Lawmakers have repeatedly called for its repeal, though efforts so far have failed to gain traction. Many in Congress appear to view the threat of repeal as leverage, hoping it will push tech companies to negotiate changes that reflect how the internet has evolved since the law was passed.

“Section 230 was created during the early advent of the internet, when lawmakers were trying to give emerging online companies room to innovate and experiment with technologies the public and policymakers barely understood,” says J.B. Branch, AI Governance and Technology Policy Counsel at Public Citizen. “It was never intended to operate as a permanent legal shield for some of the most powerful corporations in the world.”

Reframing the argument

Has Section 230 lost its protective power? Not yet.

The core premise of the law still holds: companies are not liable for user-generated content. What has changed is how plaintiffs can work around that protection. The new cases focus less on what users post and more on how platforms are designed.

In other words, product design may be the greater legal vulnerability.

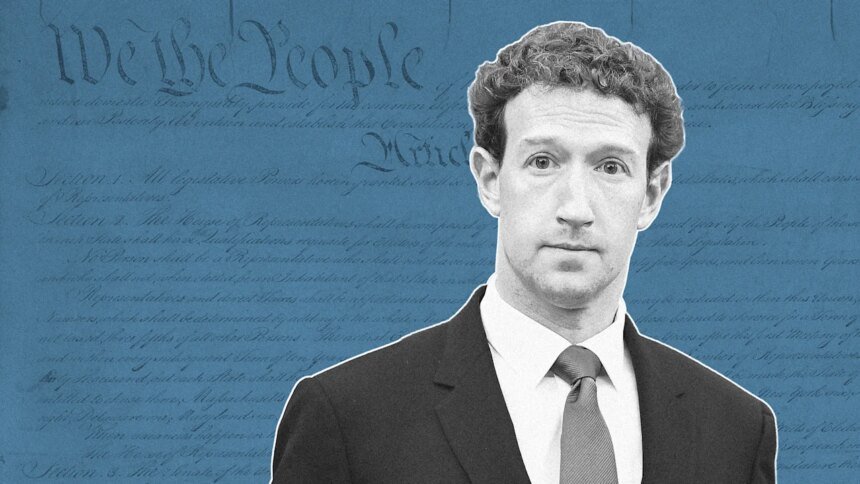

“CEOs like Mark Zuckerberg, Tim Cook, and Evan Spiegel have to rethink how they design products that kids use because they can no longer hide fully behind Section 230,” says Sarah Gardner, CEO of Heat Initiative, an organization focused on online safety for children.

That shift assumes the rulings survive appeals. Meta and YouTube are expected to challenge the decisions, likely setting up a years-long legal battle that could ultimately reach the Supreme Court. Even so, the broader debate has already begun.

Forced accountability

The implications are significant, particularly when it comes to younger users. The rulings push companies toward a level of accountability that, in some ways, mirrors the trajectory of the adult entertainment industry.

It’s an imperfect comparison, but there are parallels, says Ramnath Chellappa, a professor at Emory University’s Goizueta School of Business. Adult sites have increasingly been required to verify users’ ages. Similar mechanisms could emerge for social media.

“The mechanism for monitoring … to ensure that a minor is a minor and so on is already a very complex topic,” he says. “What does that involve? Does that involve a third party, or does one need to share their driver’s license information?”

Lexi Hazam of Lieff Cabraser Heimann & Bernstein, LLP, co-lead of the Social Media MDL (multidistrict litigation), agrees the rulings could force major operational changes, though she stops short of drawing a direct comparison.

“The implications are significant and show these tech giants that no company is above accountability when it comes to our children,” she says. “The companies will have to reassess how they design and operate their platforms moving forward … potentially requiring the companies make real changes, including safer platform design, effective age verification, and parental controls that actually work to protect young users.”

Not everyone sees weakening Section 230 as beneficial. Critics argue the current debate overemphasizes harms while overlooking benefits.

“Public debate about social media and youth mental health focuses almost exclusively on potential harms,” wrote the International Center for Law & Economics’ Ben Sperry and Sabrina Pekarovic in a recent essay, arguing that this emphasis downplays the ways platforms can enable self-expression and connect teens to broader communities. They add that treating all teenagers as equally vulnerable oversimplifies the issue and isn’t supported by the evidence. “Blanket bans assume that all teenagers face similar risks and should be treated alike,” they wrote. “The evidence suggests otherwise.”

A Big Tobacco moment

Some observers have compared the rulings to a “Big Tobacco” moment for social media, a long-awaited reckoning that could lead to sweeping regulation.

That could include changes to Section 230 or a broader overhaul of how platforms operate. Either outcome would carry major financial consequences for companies that have long been dominant players on Wall Street.

The potential impact on investors is substantial. One report from the Computer and Communications Industry Association estimates that repealing Section 230 could cost investors $2.2 trillion and lead to roughly 1.1 million lawsuits per year against digital service companies.

Some analysts believe the direction is already clear.

“The consequences for social networks will be devastating,” says Igor Pejic, a tech investing strategist and author of Tech Money. “Regulation will escalate as it did with the tobacco industry and one day we might see things like required ID authentication. This regulatory trend will not kill social media, but I believe that in a couple of years they will at least lose their status as a Big Tech company.”